Multivariate Statistical Analysis (10/10): I led this report, a multivariate statistical analysis of survey data from 1,100 university students that untangles the interconnected factors contributing to student stress. Using techniques such as Principal Component Analysis and Confirmatory Factor Analysis, the team identified and validated a three-factor structure comprising psychological distress, academic pressure, and environmental support. Cluster analysis further segmented the student population into distinct "Thriving," "Moderate," and "High Risk" profiles, while Market Basket Analysis revealed that the combination of poor sleep and low self-esteem significantly predicts high stress. Ultimately, the study argues against one-size-fits-all solutions, advocating instead for data-driven, targeted interventions that address the specific needs of vulnerable student segments.
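As a rough illustration of the Market Basket Analysis step (not the report's actual code), the sketch below computes support, confidence, and lift for a rule of the form "poor sleep and low self-esteem imply high stress". The records and flag names are hypothetical and exist only to show the arithmetic.

```python
def rule_metrics(records, antecedent, consequent):
    """Support, confidence and lift for the rule antecedent -> consequent."""
    n = len(records)
    has_a = [antecedent <= r for r in records]   # antecedent is a subset of the record
    has_c = [consequent <= r for r in records]
    n_a = sum(has_a)
    n_c = sum(has_c)
    n_both = sum(a and c for a, c in zip(has_a, has_c))
    support = n_both / n                 # share of records matching the whole rule
    confidence = n_both / n_a            # P(consequent | antecedent)
    lift = confidence / (n_c / n)        # confidence relative to the base rate
    return support, confidence, lift


# Hypothetical survey records: each set lists the flags raised by one student.
records = [
    {"poor_sleep", "low_self_esteem", "high_stress"},
    {"poor_sleep", "low_self_esteem", "high_stress"},
    {"poor_sleep", "high_stress"},
    {"poor_sleep", "low_self_esteem"},
    {"low_self_esteem"},
    {"poor_sleep"},
    set(),
    set(),
]

support, confidence, lift = rule_metrics(
    records, {"poor_sleep", "low_self_esteem"}, {"high_stress"}
)
print(f"support={support:.2f} confidence={confidence:.2f} lift={lift:.2f}")
```

A lift above 1 means the antecedent makes the consequent more likely than its base rate, which is the sense in which such a rule "predicts" high stress.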

Probability and Inference Game Using the Newsvendor Problem (10/10): I applied theoretical concepts from the Newsvendor Problem (NVP) to optimize order quantities for a European bakery chain. Using real-world data, I modeled profit functions while incorporating holding costs, and derived the optimal order quantities using parametric and non-parametric methods. Through Monte Carlo simulations, I compared the performance of these methods and analyzed the strengths and weaknesses of each. The results indicated that parametric methods outperformed non-parametric ones when the underlying demand distribution was correctly specified. Additionally, I performed a detailed time series analysis on bakery demand data to identify trends and patterns, especially focusing on the impact of weekends on demand variability.
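The core of the Newsvendor Problem can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not the project's implementation: unsold units are assumed to have no salvage value, so the overage cost is unit cost plus holding cost; the parametric method is assumed to fit a normal distribution; and the prices and demand figures are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def critical_ratio(price, cost, holding):
    """Newsvendor critical ratio: underage / (underage + overage)."""
    underage = price - cost      # margin lost per unit of unmet demand
    overage = cost + holding     # loss per unsold unit (assumes no salvage value)
    return underage / (underage + overage)


def parametric_order(demand, ratio):
    """Order quantity from a normal distribution fitted to past demand."""
    return NormalDist(mean(demand), stdev(demand)).inv_cdf(ratio)


def nonparametric_order(demand, ratio):
    """Order quantity from the empirical quantile of past demand."""
    ordered = sorted(demand)
    k = math.ceil(ratio * len(ordered))   # smallest k with k/n >= ratio
    return ordered[max(k, 1) - 1]


# Hypothetical daily demand for one product.
demand = [82, 95, 101, 110, 98, 120, 105, 90, 115, 100]
ratio = critical_ratio(price=3.0, cost=1.0, holding=0.5)

q_parametric = parametric_order(demand, ratio)
q_empirical = nonparametric_order(demand, ratio)
print(f"ratio={ratio:.3f} parametric={q_parametric:.1f} empirical={q_empirical}")
```

The parametric quantity depends on the fitted distribution being a reasonable description of demand, whereas the empirical quantile makes no such assumption but needs more data to be stable.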

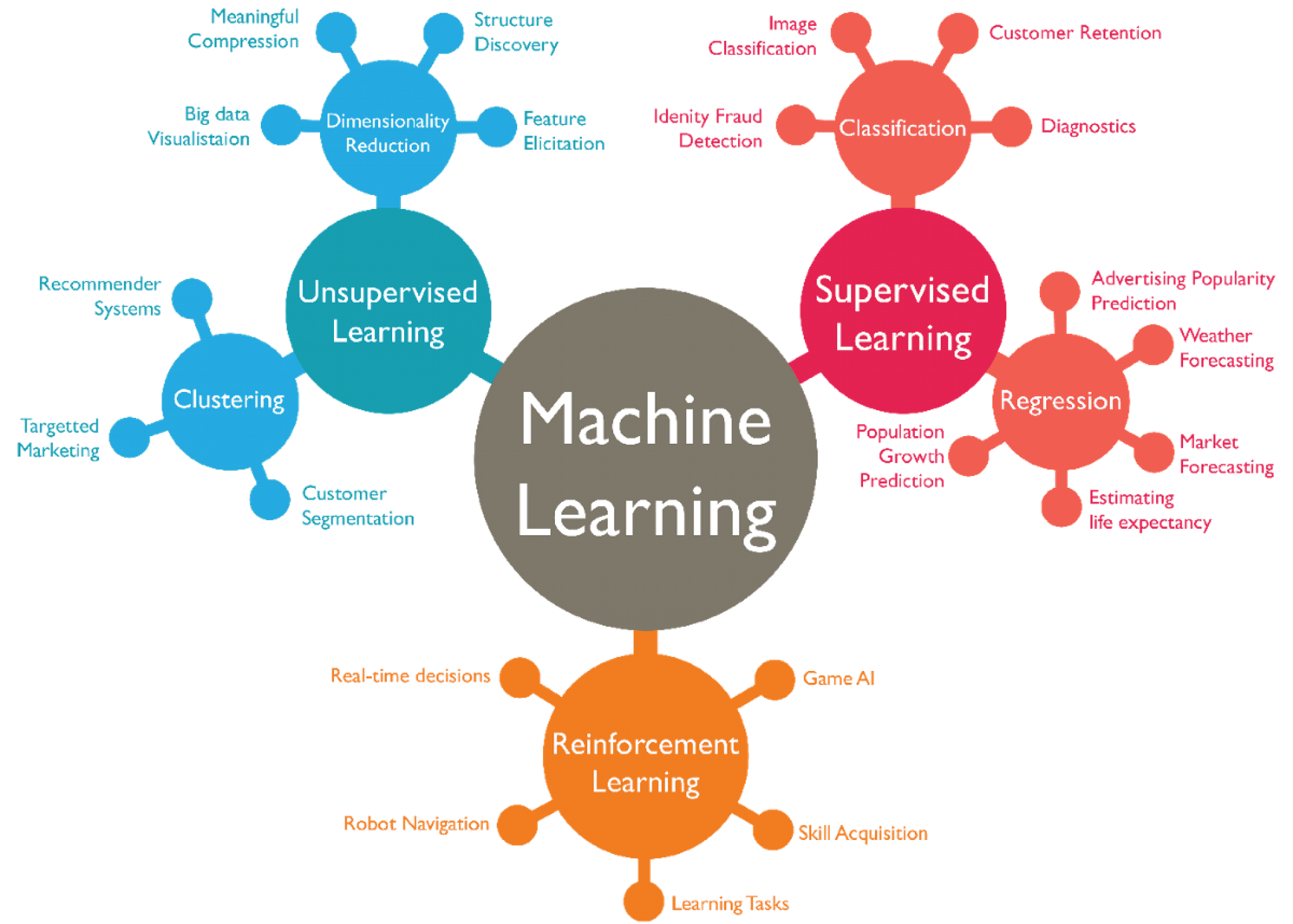

Classifying Financial News Articles Using Machine Learning (9.5/10): I worked on classifying financial news articles from the Reuters-21578 dataset, specifically identifying articles related to crude oil. The project involved pre-processing textual data through tokenization, stemming, and the removal of stop words. Using a Term Frequency-Inverse Document Frequency (TF-IDF) matrix and Latent Semantic Analysis (LSA) for dimensionality reduction, I built and compared several machine learning classifiers, including Logistic Regression, k-Nearest Neighbors (k-NN), and Naive Bayes (NB). I also implemented the AdaBoost algorithm to boost the performance of NB classifiers. The results showed that Logistic Regression achieved the highest recall, while k-NN outperformed other models in precision. This project provided valuable insights into document classification techniques in natural language processing and their application to financial data.
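To make the TF-IDF weighting concrete, here is a small sketch using only the Python standard library. It uses the textbook unsmoothed formula (term frequency times the log of inverse document frequency); library implementations often apply smoothing and normalization, so their numbers will differ. The four tokenized headlines are hypothetical and are not taken from Reuters-21578.

```python
import math
from collections import Counter


def tfidf(docs):
    """Return one {term: weight} dict per tokenized document."""
    n_docs = len(docs)
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        counts = Counter(doc)
        weights.append({
            term: (count / len(doc)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights


# Hypothetical, already tokenized and lower-cased headlines.
docs = [
    "opec cuts crude oil output".split(),
    "crude oil prices rise".split(),
    "bank raises interest rates".split(),
    "oil firm posts profit".split(),
]

weights = tfidf(docs)
for term, weight in sorted(weights[0].items(), key=lambda item: -item[1]):
    print(f"{term:8s} {weight:.3f}")
```

Terms that occur in few documents, such as "opec" here, receive the largest weights, while a term spread across most documents, such as "oil", is down-weighted.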

Factor Analysis of Musical Attributes Across 50 Artists (8.5/10): This project involved constructing an orthogonal factor model (OFM) to uncover underlying patterns within a dataset of musical attributes, including energy, danceability, and popularity. By applying a VARIMAX rotation, I identified three key factors: calmness, non-danceability, and studio production quality. These factors provided insights into genre distinctions, artist positioning, and the relative influence of different musical characteristics. The analysis was further visualized by plotting artists on an energy-danceability spectrum, offering valuable information for music industry stakeholders and recommendation systems.

Stochastic Volatility and CAPM with Time-Varying Beta (8.5/10): This project involved modeling volatility dynamics for the S&P 500 using a Stochastic Volatility (SV) model and comparing it to traditional GARCH models. The SV model demonstrated high persistence and volatility clustering in financial time series, providing valuable insights for risk management. The project also incorporated a dynamic version of the Capital Asset Pricing Model (CAPM) with time-varying beta to capture evolving market risks for Microsoft, Bank of America, and Exxon Mobil. By employing Maximum Likelihood Estimation (MLE), I assessed changes in market exposure over time and explored the benefits of dynamic risk modeling for portfolio management.

Ethics of Supplying Drugs for Lethal Injections: A Moral Dilemma (8.5/10): In this essay, I explored the ethical considerations surrounding pharmaceutical companies supplying drugs for lethal injections used in executions in the United States. The analysis drew from various ethical frameworks, including utilitarianism, Kantian ethics, and Aristotelian perspectives, to examine whether it is morally justifiable for pharmaceutical companies to provide drugs for executions. The essay highlighted the conflict between the human right to life and the government's effort to make executions as humane as possible. The ethical dilemma was further complicated by the potential consequences of refusing to supply drugs, which could lead to less humane alternative execution methods. Ultimately, the essay provided a nuanced evaluation of this complex ethical issue from multiple moral viewpoints.

Time Series Analysis (10/10) and Vector Error Correction Models (8/10): In these two projects, I conducted a detailed time series analysis of macroeconomic indicators, focusing on French and Euro area data. The first project involved modeling GDP growth, inflation, and interest rates for France using vector autoregressive (VAR) models, performing hypothesis tests, and assessing model stability. The second project expanded on this work by analyzing Euro area time series data, incorporating housing wealth and exploring cointegration relationships using Vector Error Correction Models (VECMs). I applied advanced statistical methods to uncover long-term equilibrium dynamics and short-term adjustment processes.

Volatility and Risk Forecasting Using GARCH and GAS Models (8/10): I developed models to predict the volatility and risk measures of daily returns for financial assets, including a stock (ASML), a cryptocurrency (Bitcoin), and an exchange-traded fund (S&P 500 ETF). Using both GARCH and Generalized Autoregressive Score (GAS) models, I generated 1-day-ahead forecasts for volatility and conducted backtesting to assess the accuracy of these models in predicting Value at Risk (VaR) and Expected Shortfall (ES). The analysis revealed that GARCH models performed well for low-volatility data like the S&P 500, while GAS and HARCH models better captured the volatility dynamics of highly volatile assets like Bitcoin. This project provided insights into the relative strengths of GARCH and GAS models for financial risk management across different asset classes.
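The step from a volatility forecast to VaR and ES, and the violation count used in backtesting, can be sketched as follows. This is an illustration only: it assumes normally distributed returns, which the project's GARCH and GAS specifications may not have used, and the volatility figure and return series are hypothetical.

```python
from statistics import NormalDist


def normal_var_es(sigma, alpha=0.01, mu=0.0):
    """One-day VaR and ES, as positive loss numbers, for a N(mu, sigma^2) return."""
    std_normal = NormalDist()
    z = std_normal.inv_cdf(alpha)                  # negative for small alpha
    var = -(mu + sigma * z)                        # loss exceeded with probability alpha
    es = -mu + sigma * std_normal.pdf(z) / alpha   # mean loss beyond the VaR level
    return var, es


def count_violations(returns, var_forecasts):
    """Number of days on which the realized loss exceeded the VaR forecast."""
    return sum(r < -v for r, v in zip(returns, var_forecasts))


# Hypothetical 1-day-ahead volatility forecast of 2% per day.
var_1pct, es_1pct = normal_var_es(sigma=0.02, alpha=0.01)
print(f"VaR(1%)={var_1pct:.4f} ES(1%)={es_1pct:.4f}")

# Hypothetical realized returns checked against a constant VaR forecast.
realized = [-0.05, 0.01, -0.02]
violations = count_violations(realized, [var_1pct] * len(realized))
print("violations:", violations)
```

In a backtest, the observed violation rate is compared with the nominal level (1% here); a rate well above it suggests the model understates risk.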

Predicting Law Graduate Salaries Using OLS and k-NN Regression (8/10): I developed and compared regression models to predict the starting salaries of law graduates based on factors such as law school rank, LSAT scores, GPA, and the cost of education. Using Ordinary Least Squares (OLS) and k-Nearest Neighbors (k-NN) regression, I analyzed the relationship between law school prestige and graduate salaries. The project also incorporated Monte Carlo simulations to assess uncertainty in the results. The k-NN model outperformed OLS in several instances, showing better predictive accuracy on this dataset. This analysis provided insights into the factors influencing graduate earnings and the benefits of non-parametric methods like k-NN in regression analysis.
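For readers unfamiliar with k-NN regression, the idea fits in a few lines: the prediction for a new point is the average target of its k closest training points. The sketch below is illustrative only; the two standardized features and the salary figures are hypothetical and are not taken from the project's dataset.

```python
def knn_predict(train_x, train_y, query, k=3):
    """Average the targets of the k training points closest to `query`."""
    def sq_dist(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

    nearest = sorted(
        range(len(train_x)), key=lambda i: sq_dist(train_x[i], query)
    )[:k]
    return sum(train_y[i] for i in nearest) / k


# Hypothetical (standardized rank, standardized LSAT) -> salary in thousands.
train_x = [(0.0, 0.0), (0.1, 0.2), (1.0, 1.0), (1.1, 0.9), (2.0, 2.0)]
train_y = [60.0, 62.0, 80.0, 82.0, 120.0]

prediction = knn_predict(train_x, train_y, query=(1.0, 0.9), k=2)
print(f"predicted salary: {prediction:.1f}")
```

Because distances drive the prediction, features are usually standardized first so that no single variable dominates purely because of its scale.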

Bootstrap Methods for Time Series Analysis (8/10): I explored the performance of various bootstrap methods in time series analysis, focusing on heteroscedastic data. Through an in-depth comparison of the residual bootstrap, the wild bootstrap (with both normal and Rademacher distributions), and the pairs bootstrap, I evaluated their effectiveness in hypothesis testing under heteroscedastic conditions. Results indicated that the wild bootstrap with the Rademacher distribution consistently provided the most accurate results. This project offered valuable insights into the reliability of bootstrap methods in econometric models for time series data.
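The mechanics of the Rademacher wild bootstrap can be sketched for a simple linear regression. This is a simplified illustration on hypothetical cross-sectional data, not the time series setup of the project: each residual keeps its magnitude and its observation, and only its sign is flipped at random, which preserves the heteroscedastic variance pattern.

```python
import random
from statistics import mean, stdev


def ols(x, y):
    """Intercept and slope of a simple least-squares line."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def wild_bootstrap_slopes(x, y, n_boot=500, seed=0):
    """Slope estimates from Rademacher wild-bootstrap resamples."""
    rng = random.Random(seed)
    intercept, slope = ols(x, y)
    fitted = [intercept + slope * xi for xi in x]
    resid = [yi - fi for yi, fi in zip(y, fitted)]
    draws = []
    for _ in range(n_boot):
        # Flip each residual's sign with probability 1/2 (Rademacher weights).
        y_star = [
            fi + ei * rng.choice((-1.0, 1.0)) for fi, ei in zip(fitted, resid)
        ]
        draws.append(ols(x, y_star)[1])
    return slope, draws


# Hypothetical data whose noise standard deviation grows with x.
data_rng = random.Random(42)
x = [float(i) for i in range(1, 21)]
y = [2.0 + 0.5 * xi + data_rng.gauss(0.0, 0.1 * xi) for xi in x]

slope_hat, slopes = wild_bootstrap_slopes(x, y)
boot_se = stdev(slopes)
print(f"slope={slope_hat:.3f} bootstrap SE={boot_se:.3f}")
```

For a hypothesis test, the resamples would normally be generated with the null hypothesis imposed; the sketch only shows how the bootstrap distribution of the slope is built.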

Modeling Volatility in the German Stock Market (8/10): I analyzed the volatility dynamics of the German stock market, focusing on the DAX index using GARCH and GJR-GARCH models. The goal was to identify patterns of volatility clustering and assess risk using various Value-at-Risk (VaR) measures. By comparing multiple GARCH models (GARCH(1,1), GARCH(2,1), and GARCH(2,2)), I identified GARCH(1,1) as the most suitable model. The project further involved portfolio management using multivariate GARCH models, where I estimated optimal portfolio weights for DAX and HSI stocks under different market conditions. Additionally, I explored conditional correlations using the Constant Conditional Correlation (CCC) model and evaluated how model choice impacts risk assessment and investment strategies.
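The GARCH(1,1) model selected above updates the conditional variance with a simple recursion, sigma2[t] = omega + alpha * r[t-1]^2 + beta * sigma2[t-1]. The sketch below shows that recursion only. The parameter values and returns are hypothetical rather than estimates from the DAX data, and in practice the parameters would be obtained by maximum likelihood.

```python
def garch11_variances(returns, omega, alpha, beta):
    """Conditional variances for each day plus the one-step-ahead forecast."""
    # Start at the unconditional variance (requires alpha + beta < 1).
    sigma2 = [omega / (1.0 - alpha - beta)]
    for r in returns:
        sigma2.append(omega + alpha * r ** 2 + beta * sigma2[-1])
    return sigma2


# Hypothetical daily returns and parameters.
returns = [0.01, -0.03, 0.02, -0.005]
sigma2 = garch11_variances(returns, omega=1e-5, alpha=0.10, beta=0.85)

for t, s2 in enumerate(sigma2):
    print(f"t={t} conditional volatility={s2 ** 0.5:.4f}")
```

The large return on the second day raises the next day's variance, and the high beta makes that increase decay slowly, which is the volatility clustering the model is meant to capture.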
